The Trump Administration’s National Policy Framework for Artificial Intelligence, released in March 2026, is a four-page document that does not mention healthcare once. It focuses on eliminating regulatory barriers to AI development, promoting American AI leadership globally, and reducing federal oversight of AI deployment. What it does not do is establish guardrails for how AI is used in clinical settings, insurance operations, or prior authorization decisions.

For healthcare providers, that absence of guidance matters. Payers are already using AI-driven tools to flag claims for review, generate prior authorization denials, and score providers for audit. Without a federal standard defining when those tools must be explainable, auditable, or subject to human review, providers have limited recourse when an automated denial conflicts with clinical documentation.

This article explains what the framework actually says, how payers are currently deploying AI in ways that affect billing and credentialing, and what providers and billing teams should be watching for as federal policy continues to develop.

TL;DR

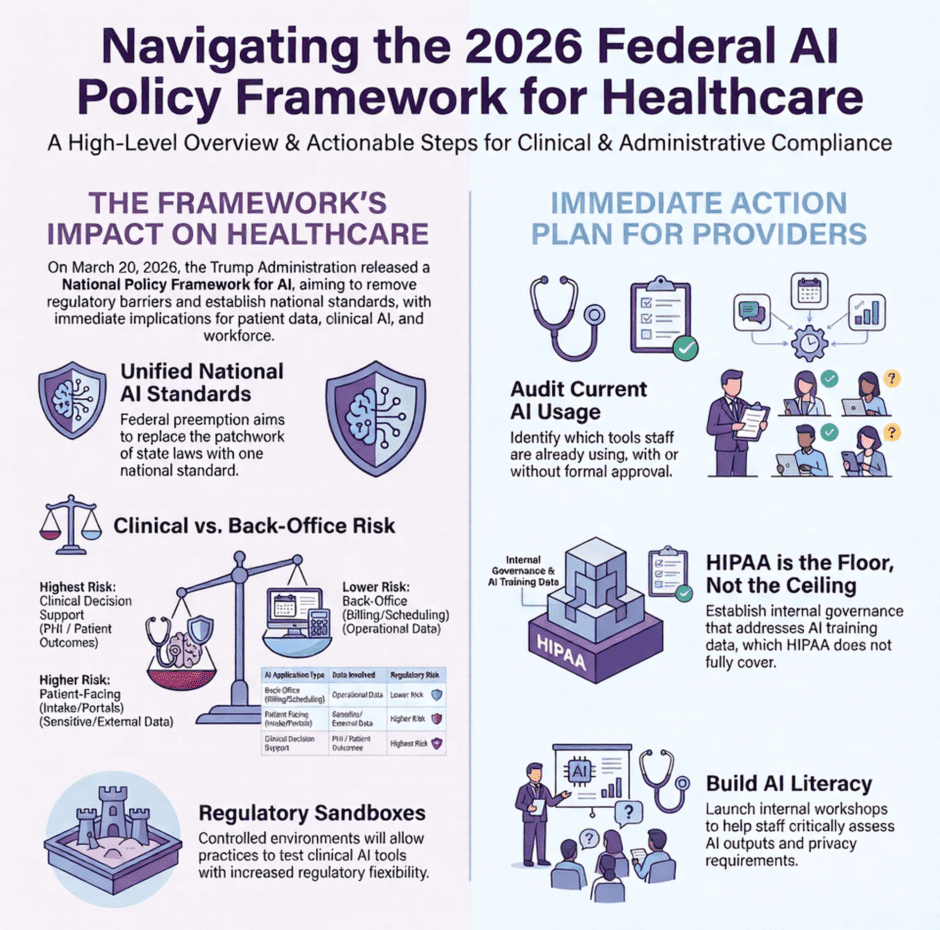

- The National AI Policy Framework is short, high-level, and doesn’t address healthcare directly.

- Patient-facing AI tools carry the most regulatory risk.

- PHI and AI training data remain a legal gray area.

- Federal preemption of state AI laws could be a major win for multi-state health systems.

- Regulatory sandboxes may open the door for faster clinical AI adoption.

- The workforce gap is a problem practices need to start solving right now, not later.

What is the National Policy Framework for Artificial Intelligence?

On March 20, 2026, the Trump Administration released the National Policy Framework for Artificial Intelligence. It’s a federal document that outlines how the United States plans to approach AI development, regulation, and adoption across industries. The goal is to keep America competitive on the global stage while making sure AI is developed and used responsibly.

The framework is short. Four pages, including the title page. It does not lay out specific laws or binding regulations. Instead, it gives Congress a set of priorities and directions to work from as formal legislation gets drafted. Think of it as a policy roadmap rather than a rulebook.

At its core, the framework focuses on four main ideas:

- Removing unnecessary regulatory barriers that slow down AI innovation in the private sector

- Establishing a single national standard for AI to replace the growing patchwork of state-level laws

- Protecting vulnerable populations, including children and seniors, from AI-enabled fraud and harm

- Building AI literacy and workforce readiness through education and apprenticeship programs

What makes this framework notable for healthcare is what it does not say. The word “healthcare” never appears in the document. Neither does “HIPAA,” “clinical,” or “patient.” That silence does not mean healthcare is off the hook. It means every provider, hospital, and healthcare organization has to do the work of figuring out how these broad principles apply to their specific situation. That is not a small task, and the stakes are high.

Not All AI in Healthcare Carries the Same Risk

Before getting into what the framework says, it’s worth making one thing clear. There’s a big difference between how AI is used in the back office versus how it’s used in clinical settings. That distinction matters a lot when you’re thinking about regulatory exposure.

Back-office AI, the kind that handles scheduling, billing workflows, prior auth tracking, and provider enrollment, tends to carry less risk. It usually doesn’t touch protected health information directly, and the consequences of an error are typically financial rather than clinical. That’s still important, but it’s a very different category than AI making decisions that affect patient health.

Clinical AI is another story. Tools that support diagnosis, flag high-risk patients, interpret imaging, or guide treatment decisions are operating in a high-stakes environment. They involve PHI, they affect patient outcomes, and they’re going to face the most scrutiny as AI policy matures. Knowing where your tools fall on that spectrum is the starting point for any smart governance strategy.
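
One way to make that spectrum concrete is to tag each tool with two yes/no attributes and derive a tier from them. The sketch below is illustrative only: the `AITool` fields, the tier names, and the example tools are assumptions made for this example, not categories defined by the framework or by any regulator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AITool:
    name: str                   # hypothetical tool name
    touches_phi: bool           # handles protected health information directly
    affects_patient_care: bool  # supports diagnosis, triage, imaging, or treatment


def risk_tier(tool: AITool) -> str:
    """Place a tool on the back-office-to-clinical spectrum (illustrative rule)."""
    if tool.affects_patient_care:
        return "clinical"
    if tool.touches_phi:
        return "back-office (PHI)"
    return "back-office"


# Example inventory; every entry is made up for illustration.
tools = [
    AITool("scheduling assistant", touches_phi=False, affects_patient_care=False),
    AITool("claims scrubber", touches_phi=True, affects_patient_care=False),
    AITool("imaging triage model", touches_phi=True, affects_patient_care=True),
]

for tool in tools:
    print(f"{tool.name}: {risk_tier(tool)}")
```

The rule checks patient-care impact first, so a tool that influences clinical decisions lands in the clinical tier regardless of how it is marketed. A real governance policy would likely need more attributes, but a two-question triage is often enough to decide which tools get reviewed first.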

Where the Framework Actually Hits Healthcare

Patient-Facing AI Is Getting More Attention

The framework specifically calls on Congress to take action against AI-enabled fraud targeting vulnerable populations, including seniors. Right now, that language is aimed at consumer scams. But the door is open for it to extend to healthcare settings, including AI tools used in patient portals, intake workflows, and billing communications.

Patient-facing AI tools sit in the highest-risk category for a reason. They’re external-facing, they handle sensitive data, and the potential for harm, whether through a bad recommendation or a security breach, is significant. Expect the standards for these tools to get tighter as the policy conversation matures. If you’re using AI-driven symptom checkers, patient intake platforms, or digital therapy applications, now is a good time to review how those tools are governed.

PHI and AI Training Data Are Still a Gray Area

The framework makes clear that existing child privacy protections apply to AI systems, including restrictions on collecting data for model training purposes. That raises an obvious question for healthcare. What about PHI?

HIPAA has governed patient data for decades. But it was written long before AI training datasets were part of the picture. The current administration’s approach is largely to let courts sort out the harder questions around training data and fair use. For healthcare organizations, that means more ambiguity in the short term. It also means that building strong internal AI governance policies, ones that can hold up under a range of possible regulatory outcomes, is not optional anymore.

Federal Preemption Could Be a Big Deal for Multi-State Providers

One of the more significant pieces of the framework is its push for Congress to preempt state AI laws that create undue burdens on businesses, with the goal of creating one unified national standard. If you operate across multiple states, you already know how expensive and time-consuming it is to manage a patchwork of different regulatory requirements. A single federal standard for AI would reduce that burden considerably.

That said, states would still keep their authority in a few important areas:

- Enforcing general consumer protection laws as they relate to AI

- Governing how their own agencies use AI in public services

- Protecting children from AI-related harms

- Enforcing HIPAA-adjacent regulations at the state level

So it’s not a blank check for federal control. State-level enforcement, especially around patient protections and consumer rights, is likely to stay in place.

Regulatory Sandboxes Could Speed Up Clinical AI Adoption

The framework recommends that Congress create regulatory sandboxes for AI applications. These are controlled environments where organizations can test AI tools with some degree of regulatory flexibility before a full deployment. For healthcare organizations that have been sitting on the sidelines with higher-risk clinical AI tools because the regulatory path wasn’t clear, this is potentially significant.

Some of the leading health systems have already been running internal pilots using this kind of controlled approach on their own. If the federal government formalizes the concept, expect clinical AI tools that have faced long, uncertain approval windows to move faster. It could open real opportunities for innovation in areas like predictive analytics, clinical decision support, and AI-assisted triage.

The Workforce Gap Won’t Wait for Federal Policy

The framework calls for AI training to be embedded in existing education and apprenticeship programs. That’s a reasonable long-term goal. But it’s a long-term goal. Your practice is dealing with the workforce gap right now.

Studies have shown that clinicians spend more than two-thirds of their time on administrative tasks. AI has genuine potential to change that, but only if the people using these tools know how to use them well, assess their outputs critically, and operate within your compliance requirements. You can’t train your staff on a policy that doesn’t exist yet.

Here are four things practices can start doing today without waiting for federal guidance:

- Identify which AI tools your staff is already using, with or without formal approval

- Develop a basic AI use policy that covers documentation, privacy, and appropriate use

- Start building AI literacy through short internal training sessions or vendor-led workshops

- Create a feedback loop so clinical and administrative staff can flag concerns or errors with AI tools

The organizations that will benefit most from AI are the ones where staff actually knows what these tools can and can’t do.
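
The four steps above can be tracked in something as simple as a shared inventory. The following is a minimal sketch, assuming a small Python script stands in for whatever spreadsheet or system a practice actually uses; the `ToolRecord` fields and the example entries are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToolRecord:
    name: str
    approved: bool = False        # formally approved for use?
    has_use_policy: bool = False  # covered by the written AI use policy?
    staff_trained: bool = False   # have the users had AI literacy training?
    concerns: list[str] = field(default_factory=list)  # staff-reported issues


def governance_gaps(record: ToolRecord) -> list[str]:
    """List the governance steps a tool is still missing."""
    gaps = []
    if not record.approved:
        gaps.append("not formally approved")
    if not record.has_use_policy:
        gaps.append("no use policy")
    if not record.staff_trained:
        gaps.append("staff not trained")
    if record.concerns:
        gaps.append(f"{len(record.concerns)} open concern(s)")
    return gaps


# Hypothetical inventory: one vetted tool, one that staff adopted informally.
inventory = [
    ToolRecord("ambient scribe", approved=True, has_use_policy=True, staff_trained=True),
    ToolRecord("chatbot used ad hoc by front desk"),
]

# The feedback loop: staff can flag an error against a specific tool.
inventory[0].concerns.append("note attributed a medication to the wrong visit")

for record in inventory:
    gaps = governance_gaps(record)
    print(record.name, "->", ", ".join(gaps) if gaps else "no gaps")
```

Keeping concerns attached to the tool record, rather than in a separate inbox, means that a review of any one tool shows both its approval status and what staff have reported about it.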

What the Framework Doesn’t Answer

The framework is intentionally broad. A few questions critical to healthcare remain completely open.

There is no guidance on clinical AI accountability. If an AI-assisted clinical decision contributes to a bad patient outcome, who is responsible? For now, existing regulatory frameworks like FDA 510(k) pathways and software-as-a-medical-device standards are still the reference point. But those weren’t designed with today’s AI capabilities in mind, and the gaps are real.

There’s also no specific treatment of AI in revenue cycle management or insurance, even though algorithmic decision-making in those areas is already generating legal and ethical scrutiny. Automated prior authorization denials, AI-driven claim adjudication, and payer-side tools that affect reimbursement are all areas where a clear federal standard would be helpful. It’s not there yet.

For practices managing billing and contracting, that ambiguity is worth tracking closely. The rules in this space could shift, and practices that are paying attention will be better positioned when they do.

Federal AI Policy FAQ

- Does the National AI Policy Framework apply directly to healthcare organizations?

Not directly. The framework doesn’t mention healthcare by name. But many of its provisions, especially those around data privacy, patient-facing tools, and workforce training, have clear implications for how healthcare organizations should think about AI governance.

- What is a regulatory sandbox, and should my practice care about one?

A regulatory sandbox is a controlled environment where organizations can test new technology with some regulatory flexibility before a full deployment. For healthcare, this could mean testing clinical AI tools without going through the full approval process upfront. The concept is still in the proposal stage, but if formalized, it could speed up clinical AI adoption significantly.

- Is HIPAA enough to cover AI use in my practice?

HIPAA is a solid foundation, but it wasn’t written with AI in mind. It doesn’t address questions like what happens when patient data is used to train an AI model, or who is responsible when an AI-assisted clinical decision leads to a bad outcome. You should treat HIPAA as the minimum standard, not the whole answer.

- How does federal preemption of state AI laws affect my practice?

If Congress passes a unified federal AI law, it could replace the patchwork of state-level AI regulations that are already starting to emerge. For practices operating in multiple states, that would reduce legal and compliance complexity. State-level consumer protection and patient privacy laws would likely stay in place regardless.

- What should I do right now if I’m using AI tools in my practice?

Start by documenting what tools you’re using and how. Create a basic internal policy covering privacy, appropriate use, and staff training. Review your vendor contracts for AI-related data handling terms. And make sure there’s human oversight in place for any AI tool that affects patient care.

Providers Also Ask

- What does the National AI Policy Framework say about healthcare?

It doesn’t address healthcare specifically. The framework focuses on reducing regulatory barriers to AI innovation, preventing AI-enabled fraud targeting vulnerable populations, and establishing a unified national standard. Healthcare organizations need to interpret and apply the general principles to their own use cases.

- Will AI replace healthcare workers?

Not in any near-term scenario. AI is more likely to change what healthcare workers spend their time on than to replace them outright. The bigger near-term shift is AI taking over repetitive administrative tasks, which could free up clinical staff for more direct patient care.

- How is AI currently being used in healthcare administration?

AI is being used for prior authorization, claims processing, scheduling, denial management, patient intake, and predictive analytics, among other applications. Some of these are well-established. Others are still in early stages with limited regulatory clarity.

- What is the biggest risk of AI in healthcare right now?

In clinical settings, the biggest risk is AI contributing to a bad patient outcome without clear accountability. In administrative settings, the biggest risk is data privacy, particularly around how patient data is handled, stored, or used to train AI models.

- How should small practices approach AI governance?

Start simple. Document what AI tools you’re using. Create a basic use policy. Make sure your vendors are HIPAA-compliant. And don’t wait for a federal mandate to start thinking about this. The practices that build governance habits early will have a much easier time adapting when clearer rules arrive.

Summary: Building a Strategy That Works Now

The AI decisions you make in the next one to two years will either set you up well when clearer rules arrive, or leave you scrambling to undo them. Starting with lower-risk use cases is the smarter path. Build competency and confidence in your internal, administrative, and non-PHI workflows first. Then expand into higher-risk clinical applications with the right safeguards in place.

A few principles worth keeping in mind as you build: Document your AI use cases and governance policies now, before regulators ask for them. Build your AI strategy around HIPAA compliance as a floor, not a ceiling. Require physician or clinical oversight for any AI tool that touches patient care decisions. And review vendor contracts to ensure AI tools meet data privacy and security standards before signing.

None of this requires waiting for a final federal law. It requires treating AI governance as a business-critical function.

Co-Founder and COO of Medwave, bringing more than 30 years of hands-on experience in healthcare revenue cycle management, payer contracting, and medical credentialing.